P.G. Tello, S. Kauffman

BioSystems, Volume 258, December 2025, 105618

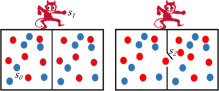

This work revisits the Maxwell Demon paradigm to explore its implications for evolutionary dynamics from an information-theoretic perspective. By removing the Demon as an intentional agent, we reinterpret the emergence of order as a natural outcome of physical laws combined with stochastic processes. Using models inspired by information theory, such as binary and Z-channels, we show how random fluctuations (e.g., stochastic resonance) can decrease entropy, generate mutual information, and induce non-ergodicity. These dynamics highlight the role of memory and correlation as emergent features of purely physical interactions without recourse to purposeful agency. In this framework, evolutionary exaptations, rather than adaptations alone, emerge as key drivers of biological evolution. Finally, we connect our analysis with recent contributions on agency and memory, underscoring the relevance of informational concepts for understanding the purposeless yet structured dynamics of evolutionary processes.
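For readers unfamiliar with the channel models named in the abstract, the following is a minimal sketch of how mutual information can be computed for a binary symmetric channel and a Z-channel. It is a textbook illustration, not the authors' model: the function names and the parameters `q` (probability that the input is 1) and `eps` (error probability) are assumptions introduced here.

```python
import math


def binary_entropy(p):
    """Shannon entropy in bits of a Bernoulli(p) variable."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def mutual_information_bsc(q, eps):
    """I(X;Y) for a binary symmetric channel.

    q   -- assumed probability that the input X equals 1
    eps -- assumed probability that either symbol is flipped
    """
    p_y1 = q * (1 - eps) + (1 - q) * eps      # P(Y = 1)
    return binary_entropy(p_y1) - binary_entropy(eps)


def mutual_information_z(q, eps):
    """I(X;Y) for a Z-channel.

    An input 0 is always received as 0; an input 1 is received
    as 0 with probability eps. Only inputs of 1 contribute noise,
    so H(Y|X) = q * H(eps).
    """
    p_y1 = q * (1 - eps)                      # P(Y = 1)
    return binary_entropy(p_y1) - q * binary_entropy(eps)


if __name__ == "__main__":
    for eps in (0.0, 0.1, 0.5):
        print(eps,
              round(mutual_information_bsc(0.5, eps), 4),
              round(mutual_information_z(0.5, eps), 4))
```

With a uniform input and `eps = 0.5`, the symmetric channel carries no information, while the asymmetric Z-channel still leaves input and output correlated. This is one simple sense in which asymmetric noise can leave a nonzero correlation between the two ends of a channel.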

Read the full article at: www.sciencedirect.com